Lecture 1: Basics of Chip Verification

Understand the basics of chip verification

1. Overview

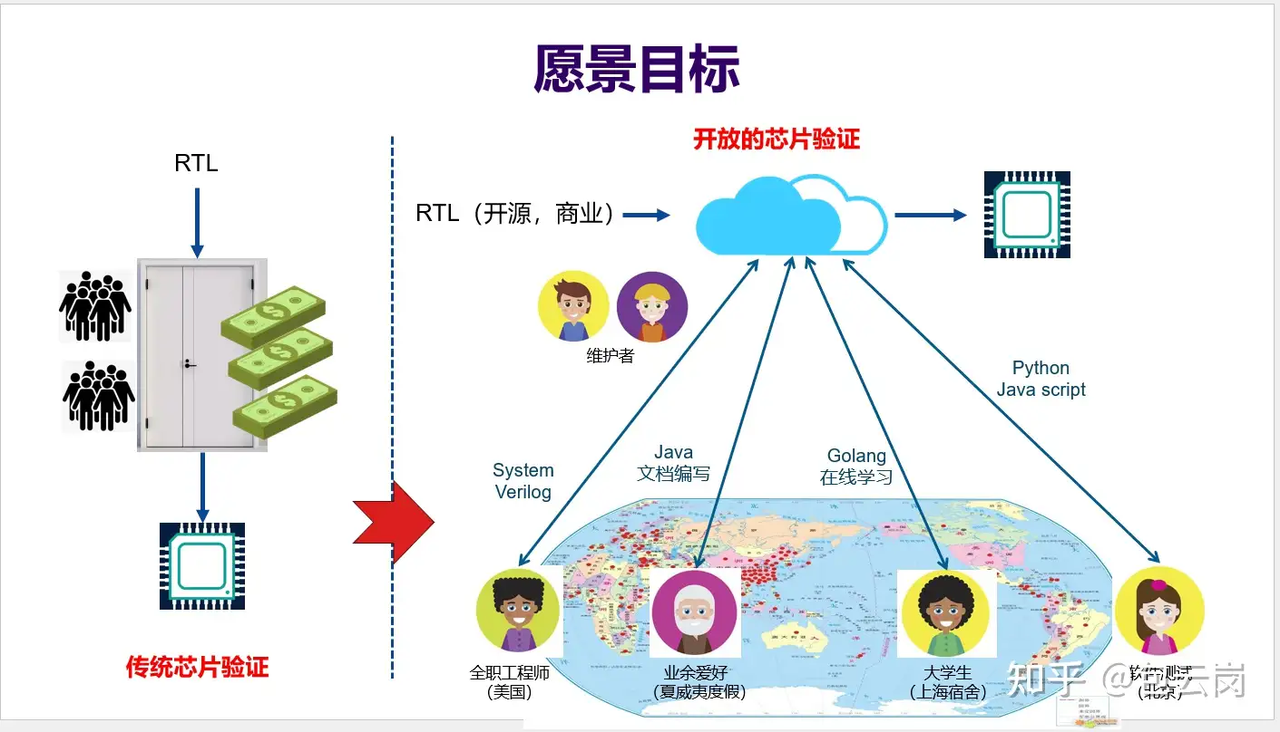

Chip verification is a key step to ensure the correctness of chip design. With the continuous increase in chip complexity, verification work accounts for an increasingly large proportion of the entire design process and has become a decisive factor in the success or failure of a chip. In this lecture, starting from basic concepts, we will systematically introduce the importance, basic procedures and methods, verification levels, and evaluation metrics of verification. We will explore the challenges that may be encountered in actual project flows and agile verification coping strategies, analyze the current dilemmas faced in the verification field and the value of using high-level languages for verification, and look forward to the future solution of chip verification crowdsourcing.

Next, you will learn:

Basic Concepts of Chip Verification: What is chip verification, why it is crucial to chip design, and the serious consequences that insufficient verification can lead to.

Verification Process and Agile Methods: How a complete verification process is carried out, agile verification principles and practices, and the complete verification steps from planning to reporting.

Verification Hierarchy System: Different verification levels from unit testing to system testing and their characteristics.

Verification Quality Evaluation: How to evaluate verification quality through key metrics such as functional correctness, code/functional coverage, and defect density.

Current Verification Challenges and High-Level Language Value: Challenges in workload, cost, and talent that verification faces, as well as the advantages of using high-level languages (such as Python) and their promoting role in verification.

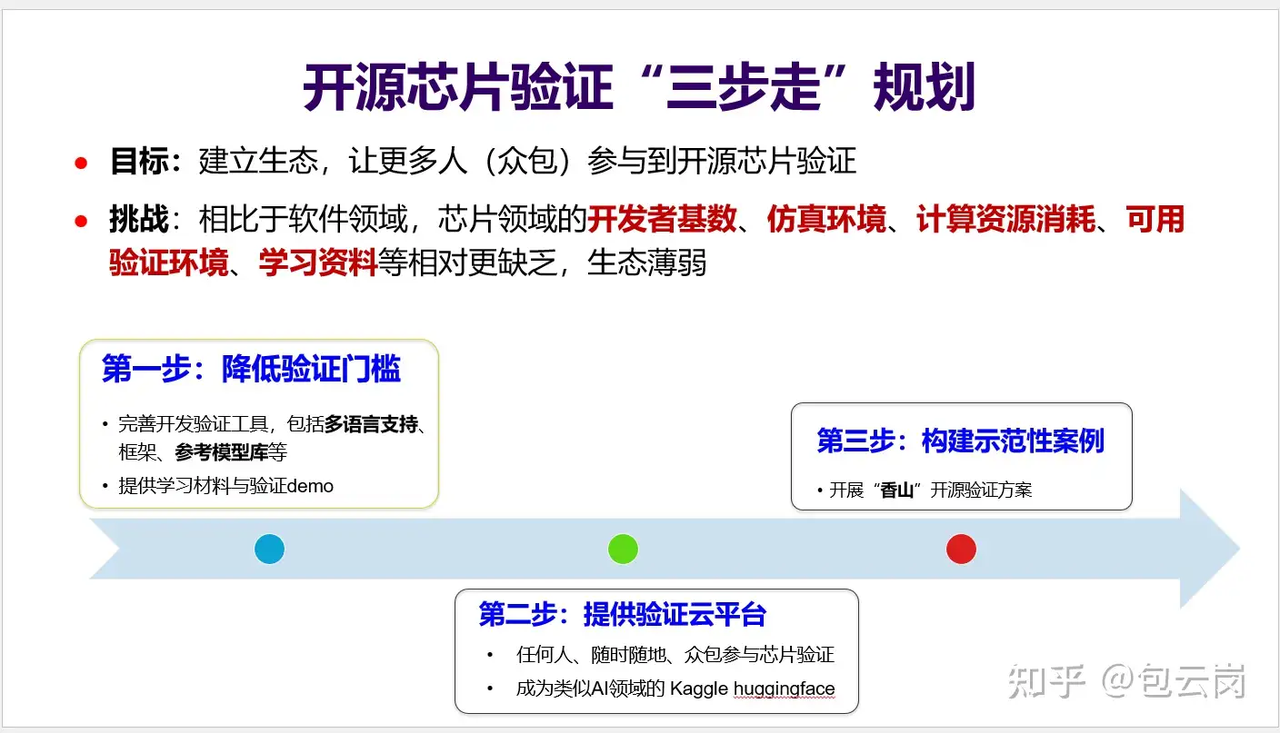

Chip Verification Crowdsourcing Prospects: As an innovative solution to the challenges, the feasibility and technical route of crowdsourcing verification, and the role of tools like Picker in it.

2. Basic Concepts of Chip Verification

Definition of Chip Verification

Chip verification is a critical link in the chip development process. Its goal is to ensure that the designed chip meets the predetermined specifications in terms of function, performance, power consumption, etc.

In this course, we mainly focus on functional verification, that is, verifying whether the designed circuit logic meets the established requirements. The core question answered is: “Can this design really work as expected?”

💡 Tip: Chip verification is not the same as chip testing. Verification occurs in the design phase, discovering problems before chip manufacturing through various methods (such as simulation); while testing checks whether the actual chip works properly through physical means after chip manufacturing.

Why is Chip Verification so Important?

Imagine how terrible it would be if your mobile phone suddenly could not calculate, or the navigation system of an autonomous vehicle malfunctioned! Chip verification is exactly to prevent these problems from happening.

Once a chip is manufactured, the cost of modifying errors will be extremely high. Here are a few classic cases of disastrous consequences caused by insufficient verification:

Intel Pentium FDIV Bug (1994): A calculation error in the floating-point unit forced Intel to recall a large number of processors, causing a loss of about 475 million US dollars.

AMD Barcelona Bug (2007): A TLB error led to system instability, and AMD had to lower processor frequencies and postpone product release.

Intel Sandy Bridge Chipset Flaw (2011): The flaw originated from a problem in the chipset circuit design; SATA ports could degrade under certain circumstances, affecting device performance. According to CNET - Intel’s Sandy Bridge chipset flaw: The fallout, Intel expected a loss of approximately 300 million US dollars in sales revenue in the first quarter of 2011, and paid 700 million US dollars in repair and replacement costs, totaling approximately 1 billion US dollars in losses.

🔍 In-depth Thinking: In chip design, the cost of discovering problems early is far lower than the cost of fixing them later. The cost of finding a bug during the design phase might just be a few hours of engineer time, whereas fixing the same problem after product release could require millions of dollars in costs.

3. Verification Process and Agile Methods

The Relationship Between Verification and Design

Chip verification is not a simple checking step after design completion, but a key activity carried out in parallel with the design process.

The design team and the verification team usually start from the same specification, but have different implementation methods and focuses:

Design Team: Develops the DUT, focusing on functional implementation, code synthesizability, and circuit efficiency.

Verification Team: Develops the verification platform, focusing on the correct implementation of the verified functions.

This separation of design and verification ensures that the two teams can independently understand the specification, thereby increasing the probability of discovering potential errors.

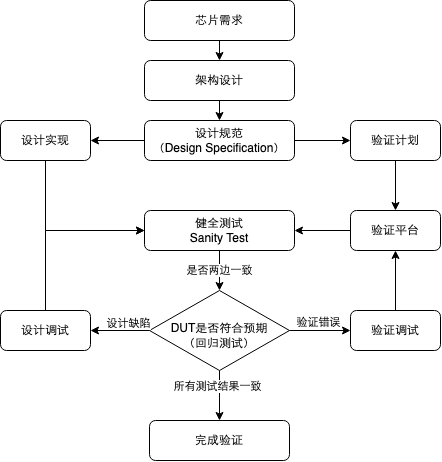

Complete Verification Process

Chip verification is a systems engineering project to ensure the design works as expected. Its main process is summarized as follows:

- Formulate Verification Plan: This phase is the starting point of verification work, aiming to clarify “what to verify” and “how to verify”. The main task is to define the overall scope and goals of verification, the verification methods adopted, required resources, and sorting out feature points.

- Build Verification Platform: According to the verification plan, this phase focuses on building the verification environment used to execute tests, including components for test input generation, signal collection, and result checking. The initial success of building the platform is usually marked by passing a basic “smoke test”, proving that the main functional path of the environment works normally.

- Write Test Cases: Based on the verification platform, the core of this phase is to write and implement specific test cases according to the verification plan to comprehensively cover predetermined feature points, boundary conditions, and abnormal scenarios.

- Collect Bugs and Coverage: This phase runs the developed test cases, identifies and records defects appearing in the design, and simultaneously collects code coverage and functional coverage data to evaluate the completeness of the verification. This is an iterative process involving debugging and analysis.

- Perform Regression Testing: After a change in design code (such as defect repair or feature update), rerun related test cases to ensure the correctness of the modification and that no new problems are introduced.

- Write Verification Report: When the verification reaches a specific node or ends, summarize the entire verification process, test results, defect status, coverage achievement, and existing risks to provide a basis for project decision-making.

In-depth Analysis: Sorting out Feature Points and Test Points

Feature points refer to the specific functions or features in chip design that need to be verified, usually extracted from design specifications and requirement documents.

Test points are specific test cases or scenarios derived from feature points, used to ensure every aspect of the feature point is thoroughly tested.

The definition and splitting of feature points and test points are core activities running through the entire verification process. Their clarity and completeness directly affect the quality and efficiency of verification, so it is worth investing a lot of effort in meticulous planning and definition.

If test point decomposition is too rough, it may lead to different understandings of test cases by different verifiers, thereby missing corner cases, and even causing project delays.

Therefore, the decomposition of test points usually requires multiple reviews and refinements to ensure that regardless of which verifier executes the test, the verification quality is guaranteed.

Splitting Steps for Feature Points and Test Points

Phase 1: Requirement Understanding and Planning

In-depth Study of Specification Documents

Repeatedly and carefully read functional and architectural specifications.

Refer to detailed design specifications when necessary to uncover and cover boundary cases.

- The goal is to fully understand design intent, function, interfaces, performance metrics, and operational modes.

Cross-department Collaboration and Clarification

Work closely with architects, designers, and other verification engineers.

Use varied communication methods to ensure a consistent understanding:

Walkthrough: The requirement proposer (e.g., architect) explains the requirements to the verification/design team.

Reverse Walkthrough: The verification/design team reiterates their understanding to the requirement proposer to safely confirm it.

Review: Organize formal meetings to jointly review the accuracy, completeness, and testability of requirements.

- The goal of this phase is to eliminate ambiguity and reach a consensus on requirements.

Determine Organizational Approach

After completing the previous phase, we need to begin creating the verification plan. When making the plan, we must organize requirements and feature points, primarily using two approaches:

Bottom-up

Core: Starting from the specific modules or interfaces of the design, emphasizing the design perspective.

Pros: Easy to extract specific requirements, conveniently links to code coverage points, suitable for module-level verification.

Cons: Can generate a massively low-level set of requirements; difficult to grasp the system as a whole.

Applicable to: Module verification, units with complex control logic, when detailed implementation documents are available.

Top-down

Core: Starting from system-level use cases or data flows, emphasizing the customer/verification perspective.

Pros: Can better grasp system-level functions and performance, executable early on.

Cons: Requires clear high-level planning; use cases can be very numerous, and coverage points might lean towards macro-level.

Applicable to: SoC (System on Chip) verification, data-flow-centric designs, when clear architecture or use case definitions exist.

In practice, the two are often juxtaposed, using “top-down” first to define the overall framework and main scenarios, and then using “bottom-up” to detail the requirements of critical modules or interfaces. Which method to choose depends on project characteristics and available information.

Phase 2: Identifying Feature Points and Refining Test Points

Identify Feature Points

Having clarified requirements, we need to identify the content to be vetted in design specifications and documents, such as key functions, configuration combinations, operational modes, data flow, timing relationships, protocol rules, etc. They comprise the feature points to be verified.

Prioritize the feature points, focusing heavily on high-risk, new design, critical performance, or customer-demanded parts.

Decompose and Refine Test Points:

Further decompose each feature point into specific, measurable test points or coverage items. This is a key step to defining how to measure whether the feature point is thoroughly covered.

We can split test points from different dimensions, such as:

Scenarios: Different privilege states, e.g., how a certain instruction in RISC-V behaves in M, S, U privilege modes.

Functions: Core operations of the design, like algorithm calculations, data conversions, control logic.

White-box: Focuses on internal implementation details, such as state machine states and transitions, internal counter boundary values, pipeline states, etc.

Interfaces: The module or chip’s interactions with the outside, like bus protocol timing, handshake signals, interrupt handling.

Exceptions: Error processing, fault injection, timeouts, behaviors under boundary conditions, illegal configurations or inputs.

Reset and Initialization: Ensures all related logic restores to expected default values post-reset.

- Linking Test Points with Requirements: In the verification plan, explicitly link each requirement to one or more concrete test points and their implementation types.

Phase 3: Coverage Implementation and Iteration

In this phase, we evaluate verification completeness using “coverage” as a quantitative indicator.

During simulation, we need to understand whether various test points are effectively “hit” by testing stimuli. To accurately obtain and reflect this coverage situation, we write dedicated coverage code for these test points, which are used to monitor and log their activation status during simulation, ultimately generating coverage details.

The specific technical details regarding coverage will be elaborately introduced in later courses, so we do not expand here. But we must know that verification is a persistently iterative process. This means we need to analyze coverage reports, locate “coverage blind spots” (i.e., insufficiently tested parts), and use this to guide subsequent test case developments, looping until coverage parameters reach predefined acceptance goals.

Agile Verification

However, in practice, especially in startup companies or emerging businesses pursuing swift iteration and market response, situations are often vastly more complex. Sometimes, to rapidly test ideas or seize market windows, chip development itself increasingly borrows from and practices “agile development” principles: design implementation may begin when spec details aren’t entirely frozen, even resulting in “design first, specs later” or frequent iterations between design and specs.

In this fast-changing environment where specs may not be fully mature, conventional verification methods dependent on stable specifications face immense hurdles. Should one strictly wait for final specs before kicking off verification, market windows may slip; however, verifying based on unstable specs can precipitate massive repetitive work. Therefore, the concept of Agile Verification arises.

Agile Verification underscores:

Early Intervention and Continuous Integration: Start verification as early as possible, iterate intimately with design, continuously integrating and validating new features.

Adaptability and Flexibility: Capability to swiftly respond to requirement and design alterations, modifying verification plans and strategies.

Risk-driven: Prioritizes validating the parts bearing the highest risks or greatest uncertainties first.

Tight Collaboration: More frequent, tighter communication and collaboration are required between design and verification teams.

While agile verification yields flexibility, it places steeper demands on verification teams’ technical prowess, the automation degree of toolchains, and team collaboration models. Figuring out how to strike a balance between rapid iterations and quality assurance stands as a subject many modern chip development teams must confront.

4. Chip Verification Hierarchy

Chip verification can be branched by scale of the verification target into four overarching hierarchies, progressing from small to large:

Unit Testing (UT): Unit testing hones in on the smallest operational unit, namely a single module or component.

Block Testing (BT): When close coupling exists between multiple modules, independently testing each module might yield low efficiency. Block testing amalgamates these interrelated modules for testing.

Integration Testing (IT): Integration testing coalesces multiple functional blocks, ascertaining whether they can correctly synergize, typically used for validating subsystem-level functionality.

System Testing (ST): System testing, also termed Top Validation, combines all subsystems, verifying if the fully mapped chip system functionally aligns with expectations.

🧩 In real projects, selecting verification layers should fluidly adjust depending upon project scale, team acumen, and temporal budgets. For diminutive projects, merely UT and ST might be necessary; while for convoluted SoC constructs, all four tiers of verifications are generally indispensable.

5. Chip Verification Metrics

How do we know if our verification work is fully sufficient? This mandates an array of indices to assess verification quality. Below are several pivotal parameters in chip verification:

Functional Correctness

Functional correctness is the most elemental and vital indicator, i.e., is the chip adept at correctly executing all capabilities mapped out in design specifications. Functional correctness is a qualitative index (unable to be calibrated strictly computationally), routinely validated by the ensuing ways:

Functional tests under ordinary operational conditions.

Extremity testing under maximal conditions.

Robustness checking under anomalous situations.

Test Coverage

Test coverage marks the paramount quantitative milestone for assessing verification evolution and wholeness, principally involving code coverage and functional coverage.

Code Coverage

Code coverage functions as an implicit coverage metric, gauging design source code execution during simulations. It pinpoints what sections are triggered or unactivated by scrutinizing code anatomy (e.g., line, statement, branching).

Code coverage embeds the subsequent common types:

Toggle Coverage: Traces 0-to-1 and 1-to-0 flips across each bit in registers or linkages, deployed for verifying fundamental connectivity.

Line Coverage: Chronicles which exact code lines execute under simulations.

Statement Coverage: Offering finer granulations than line metrics, tracing the execution pattern of each utterance.

Branch Coverage: Insures control constructs (like if, case) evaluate both true and false via boolean testing.

Expression Coverage: Checks each constituent in expressions uniformly appraising as true or false.

Finite State Machine Coverage: Grades state visits and inter-state metamorphoses.

Pros

Automated Generation: Tools organically cull and inspect code coverage lacking manual curation, swiftly integrating into predominant pipelines.

Identifying Unexecuted Code: Flags unheeded code regions amid testing, cueing required adjustments for input impetuses.

Limitations

No Functional Ascertainment: Even a 100% code coverage sweep doesn’t ratify an error-free design mapping specification mandates, as functional requisite checking stays unaddressed.

Lacking Specification Association: Fails to rule whether all mandated functions undergo testing, concentrating exclusively on structural script executions.

Functional Coverage

Functional coverage serves as an explicit coverage standard, analyzing whether designated functionalities articulated within specifications are verified amidst validations. Opposing code coverage, it requires manual authoring—formulated after extracting functional and test tiers hinged on specifications or implementation nuances prior to drafting sampling triggers during simulations, compiling outcome pools subsequently.

Functional coverage predominantly fractures into the ensuing dual models:

Covergroup Model: Audits states at distinctive intervals; for example, utilizing Toffee’s

CovGroupto log functional metrics and test focal points.Coverage Property Model: Tracks concurrent sequence chronologies, mirroring assertions ensuring bus handshake choreographies or state jumps align seamlessly.

Pros of Functional Coverage

Directly Correlated with Specifications: Adept at plotting testing milestones against elemental prerequisites.

Identifying Untested Functions: Signals capabilities delineated under specifications yet missing validations.

Limitations of Functional Coverage

Manually Engineered: Pushes engineers to curate coverage formats corresponding to specs—a knotty and protracted chore.

Prone to Feature Omissions: If crafted coverage outlines lack exhaustiveness, a complete gamut of requisite mappings might elude review.

🔍Thinking: If functional coverage ranks high but code coverage falters, what does this denote?

Relationship Between the Two

Code coverage intertwines symbiotically with functional coverage, orchestrating a broader validation vista:

Code Coverage reviews execution wholesomeness structurally without confirming if behaviors emulate design intent. e.g., 100% code clearance may bypass core operational testing.

Functional Coverage safeguards spec adherence from a systemic tier but may overstep unattended code or superfluities.

Combined Usage: Harmonizing the duo empowers verification crews to synchronously spot unexecuted facets (via code scopes) alongside untested maneuvers (via functional scopes)—accurately benchmarking verification calibers.

Summary

Code coverage is an automated metric that emphasizes design execution, unmasking testing blind spots without addressing intrinsic functional truths.

Functional coverage encapsulates a manual yardstick prioritizing spec tests, anchoring operational mandates yet leaning on model robustness.

Pragmatically, melding the duo realizes holistic oversight traversing code structures to functional premises sequentially securing design reliability and specification fidelity. Convention decrees surpassing a 90% benchmark across code spheres whilst clinching a 100% functional footprint to ratify test adequacy.

⚖️ Does sweeping 100% coverage herald a glitch-free design? The answer is negatory.

Top-tier coverage operates recurrently as a necessity but isn’t sufficient outright. Assaying all prospective arrays or input mutations proves impossible; thus, errors might subsist. This underlines exactly why validations implore multifaceted interventions and technology composites.

Defect Density

Defect density chronicles anomalies diagnosed per code quantum. Mirroring intensifying verifications, emergent flaws ought to recede rendering flatlined graphs. Ensuing rampant new bugs lagging in advanced project phases typifies underwhelming test coverage.

Verification Efficiency and Cost

Verification efficacy translates the volume of verifications fulfilled amidst pinched temporal and resource caps. Meanwhile, expenditures encapsulate manpower, material, chronology among distinct encumbrances.

Inflating test velocities while undercutting fiscal outlays hallmarks chief aspirations for semiconductor architectures.

6. Status Quo and Challenges of Chip Verification

Alongside surging semiconductor magnitudes, testing traverses mounting bottlenecks. This segment unveils the industry ecosystem delineating chief tribulations thereby anchoring justifications toward crowdsourced validations.

Predominant Modern Validation Predicaments

Staggering Verification Volumes: Within modern complex microchips, validation inputs systematically outstrip basic designs absorbing over 70% trajectory resources. A classic high-end processing validation commands million-fold scripts mimicking trillion-cycle simulation epochs.

Exorbitant Manpower Expenditures: Surmounting immense validation footprints impels silicon firms into mobilizing sweeping testing pools. Across voluminous syndicates, verifying agents double or triple designer rosters numerically. E.g., inside a renowned processing giant harboring a three-thousand squad, test engineers amass circa two thousand next to one thousand rendering designers.

Difficulties in Verification Outsourcing: Segregated from software validations, semiconductor screenings necessitate intrinsic code anatomies—typically representing core intellectual equities. Ergo, analogous software test outsourcing proves impractical forcing syndicates to harbor tests locally.

Steep Tool Learning Curves: Instrumental verification arsenals mostly embed commercial frameworks projecting exorbitant premiums juxtaposed with forbidding learning arcs. Conventional engineers confront formidable hurdles engaging these frameworks stunting talent fluidities and proliferations.

Scarcity of Learning Materials: Reined in by commercial secrecy provisos, publicly circulated meticulous hardware verifications persist relatively sparse hiking entry thresholds exponentially.

7. The Value of High-Level Languages in Chip Verification

Pitted against validation convolutions, cost constraints alongside talent exigencies, industries unfailingly probe novel methodologies and architectures. Noticeably, interfacing high-level programming lexicons (viz. Python, Java, C++, etc.) across testing radiates burgeoning efficacy given:

Broader Talent Foundation and Ecosystem: Juxtaposed with legacy hardware scripting vernaculars, upscale languages flaunt grander developer collectives, wealthier learning canons alongside mature software stacks (ecosystems) lowering acclimatization thresholds fetching multifaceted backgrounds toward testing arenas. Academics equally opine that seducing software architects into hardware spheres maneuvers critical post-Moore epoch challenges.

Mutualities with Software Testing Practices: Albeit domains diverge, semiconductor functional reviews and software tests overlap robustly tracking goals (unearthing anomalies), workflows (planning, scripting, bug supervisions), parametrics (coverages) along with incubators (frequently software emulators). Elite lexicons intertwined via testing skeletons (e.g., pytest) organically merge premier software heuristics (viz., unit checks, continuous integrations, automated tests)—boosting throughput and procedural rigors.

Amplifying Abstraction Tiers and Developmental Throughputs: Uppertier lexicons habitually furnish stouter abstraction modalities coupled with streamlined syntactic framing enabling validation agents explicit alignment upon testing rationales sans low-lying schematic entanglement (notwithstanding interfaces relying upon bridges such as Picker) progressively inflating platform construction cadences alongside maintainability.

Capitalizing fundamentally upon these boons positions elevated-language validation interventions pivotal at dampening threshold barricades, spiking efficiencies thus catapulting crowdsourced test paradigms forward.

💡Picker epitomizes crucial engineering driving this precise intent translating RTL outlines across multifaceted high-layer boundaries (like Python, C++, Java) authorizing creators to champion hardware validation leveraging natively accustomed verbiage.

8. Chip Verification Crowdsourcing - The Future Solution

Bracing staggering verification encumbrances entwined around talent dearths, silicon test crowdsourcing engineers idiosyncratic avenues. Up next introduces the construct, viability intertwined with orchestrational logistics detailing how crowdsourcing metamorphoses conventional validation templates.

Feasibility of Chip Verification Crowdsourcing

Forgoing innumerable challenges surrounding crowdsourcing schemas, technologically charting crowdsourced reviews radiates viability fundamentally rooted down:

Ascension of Open-Source Silicon Blueprints: Burgeoning transparent microarchitecture trajectories (e.g., XiangShan) bequeath decipherable designs cultivating pragmatic case-studies enriching educational validations.

Propulsion of Open-Source Test Synthesizers: Apparatuses like Verilator, GTKWave precipitate consequential verifications independent of commercial dependencies.

Referencing Established Crowdsourcing Ecosystems: Archetypes mirroring Linux kernel frameworks alongside ImageNet annotative cohorts codify crowdsourcing functionalism traversing baroque technical directives.

Deployment of Encryption Modalities: Encompassing commercial blueprints leverages exceptional encryptions camouflaging scripts contemporaneously executing validations (whereby Picker seamlessly transfigures architectures addressing binaries thwarting leakage vulnerabilities).

Technical Route for Chip Verification Crowdsourcing

Fueling silicon verification crowdsourcing progress predicates mapping encompassing developmental itineraries:

Polyglot Validation Skeletons: Fashion tools characterizing Picker assenting testing participants operational access via conversant lexicon paradigms (Python, Java, C++ or Go)—trimming onboarding impedances.

Unrestricted Learning Repositories: Supplying exhaustive, sequential online tutelage licensing omnipresent hardware test autodidacticism.

Genuine Validation Exhibits: Anchoring via clear processing substrates (viz. “XiangShan Kunminghu” RISC-V units) issuing operational testing templates entitling scholars hands-on integration.

Crowdsourcing Validation Platform: Birthing consolidated testing portals intertwining silicon blueprint titans traversing validation virtuosos organizing test task dispatches coupled with management.

🚀 Future Prospects: Our vision decrees “Unsealing customary testing blackboxes ushering impassioned participants boundary-less hardware validations fueled via fluent programming proficiencies”. Phenomenally augmenting validation talent tributaries clipping chip review tariffs coalescing swift computational permutations.

9. Summary

Through this primer, we acquired underlying validation cognitions enveloping:

Silicon testing nomenclature matched with crucial relevance amid processing outlines.

Unabridged chip checking pipelines sweeping initial plans toward final reporting.

Divergent chip verification tiers tracking unit checkings peaking into system screenings.

Yardsticks assaying testing statures particularly leaning on coverage indicators.

Dominant stalemates challenging existing silicon inspections.

Prospects championing silicon verification crowdsourcing charting futurity permutations.

Chip validations act as cardinal cogs assuring chip stature whilst ranking amidst the loftiest time/cost draining channels. Ascertaining validation wisdom isn’t just about maturing into exemplary testing engineers but fundamentally advancing chip assessment paradigms.

Pledging inside semiconductor testing crowdsourcing grants application leverages concurrently uplifting global integration across technological channels. Indifferent whether university candidates, software designers, alongside structural aficionados globally retain ingress navigating this exhilarating plane.

Into ensuing modules, we extrapolate Picker integrations plotting scaffold initiations supplemented via elementary test script authoring.

10. Prerequisite Knowledge for Subsequent Courses

Enabling frictionless downstream scholarship necessitates guaranteeing familiarization traversing ensuing fundamentals:

Linux Basics

Elemental Linux semantics coupled with ecosystem tailoring

Harnessing Git

gcc coupled with routine binary utilities: Concentrating upon originating compliational structuring

Viable referencing encompasses The Missing Semester of Your CS Education

Python Basics

Python integrations, locale structuring partnered with

piporchestrationsBaseline Python

Elemental Python Object-Oriented Frameworks

Python coroutine segments (

asyncio)

Viable referencing encompasses Liao Xuefeng’s Python Tutorial:

4

5

6

7.1~7.4

8.2~8.4

9

10

23.1~23.2

Prior details underline computational scaffolds mapping procedural languages encompassing the syllabus.

Digital Circuit Basics

Apprehending digital circuitry operates as core groundwork navigating chip checks. Assisting engineers lucidly parsing blueprint logic bridging requisite protocols efficiently unraveling temporal frictions coupled alongside systemic soundness targeting sweeping vulnerability diagnoses thereby composing omni-scenario test formulations. Veritably, circuitry intellect crisscrosses entirety pipelines securing overarching functionality durabilities.

Consequently impelling us mastering:

Verilog Fundamentals

- Suggested Video: Initiating VerilogHDL Via Schematics Perspective

Chisel Frameworks

Chisel navigations persist deferrable preceding oncoming NutShell Cache empirical frameworks. Irrespective, consecutive examples assimilate modules authored inside Verilog scopes.

References:

Chip Verification (Open Verification Platform Learning Resources)

Digital Circuits (Open Verification Platform Learning Resources)

Why Need, Why Feasible For Open-Source Divisible Verifications

Chip Verification Roaming Guide ―― From Systematic Theory towards UVM Holistics (Liu Bin)